INTRODUCING A NEW GOOGLE PLUS QUALITY METRIC: VIEWS PER FOLLOWER VPF

Google Plus recently introduced a new public metric on user profiles: the total number of post views the user has generated. This, in itself, is an interesting metric, because it shows how active each user is on Google Plus and how many times people have actually viewed the posts they have made. But I have decided to take this number one step further and introduce a brand new quality metric: Views Per Follower (VPF).

Views Per Follower (VPF) should be calculated as follows:

Number of Total Views / Number of Followers

That is, the number of total views a user has generated, divided by the number of followers the user has. The higher the VPF number, the more “reach” this user has and, I believe, the higher the quality of the posts that the user is making. It could also indicate how “engaged” those followers are, because the more engaged they are, the more Google Plus tends to show that user’s posts to them. Let’s look at some specific examples, using specific users:

Danny Sullivan (Search Engine Land):

Number of Followers: 1,757,629

Number of Views: 37,878,093

Views Per Follower VPF: 21.5

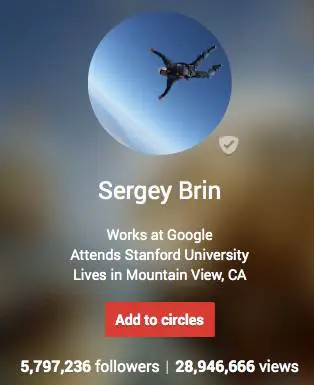

As you can see, Danny Sullivan’s posts have been viewed an average of about 21.5 times for every follower he has. Let’s take a look, though, at another public profile, that of Sergey Brin from Google:

Sergey Brin (Google):

Number of Followers: 5,797,236

Number of Views: 28,946,666

Views Per Follower VPF: 4.99

And, just to be fair, let’s take a look at my personal Google Plus profile as a comparison. Remember, the higher the VPF number, the better: a higher number means that the user’s followers are more “engaged” and view more of the posts that the user makes.

Bill Hartzer

Number of Followers: 16,139

Number of Views: 9,163,089

Views Per Follower VPF: 567.76
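If you want to run the numbers yourself, here is a minimal Python sketch of the calculation, using the follower and view counts quoted above. The function name `views_per_follower` is just a name chosen for illustration; it is not anything Google provides.

```python
def views_per_follower(total_views: int, followers: int) -> float:
    """Return total views divided by follower count (VPF)."""
    if followers <= 0:
        raise ValueError("followers must be a positive number")
    return total_views / followers


# (followers, total views) as quoted in this post.
profiles = {
    "Danny Sullivan": (1_757_629, 37_878_093),
    "Sergey Brin": (5_797_236, 28_946_666),
    "Bill Hartzer": (16_139, 9_163_089),
}

for name, (followers, views) in profiles.items():
    print(f"{name}: {views_per_follower(views, followers):.2f}")
```

Rounded to two decimals, this gives 21.55, 4.99, and 567.76 (the 21.5 quoted for Danny Sullivan is the same figure cut to one decimal).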

Danny Sullivan’s posts on Google Plus get plenty of views, but what his numbers show is that a lot of his followers do NOT see his posts, and I bet that a lot of those followers aren’t very active on Google Plus. They know they should be following Danny Sullivan because, well, he’s Danny Sullivan. But they’re not very “engaged” with Danny’s posts. Compared to Sergey Brin’s followers, though, Danny’s look really good: Sergey’s followers are really not very engaged, are most likely not very active on Google Plus, and just don’t see many of Sergey’s posts.

But let’s take my profile, for example. Sure, I don’t have a million or five million followers on Google Plus. But, if you look at my VPF, you’ll see that my 16,000 followers are highly engaged, active Google Plus users. They see my posts. They engage with my posts enough so that Google Plus shows them more of my posts.

There are a lot of different reasons why your Google Plus profile’s VPF is what it is, but you have to keep in mind that a quality profile on Google Plus isn’t dependent on the number of followers you have. In fact, it’s all about how engaged your followers are: calculating the VPF for a user’s profile will show how active that user is, how good their posts are, and how engaged their followers are on Google Plus.

So, what’s your Views Per Follower VPF? Is yours higher or lower than mine?

Bill Hartzer is Globe Runner’s Senior SEO Strategist. Follow him on Google Plus.

Gideon Rosenblatt has also come up with, essentially, the same type of metric, and there’s an interesting discussion going on over on his Google Plus post.

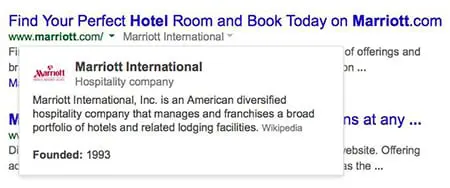

GOOGLE ADDS KNOWLEDGE GRAPH DROP-DOWN WITH COMPANY DATA TO SEARCH LISTINGS

Google has recently added a drop-down to search results listings that shows Knowledge Graph data when you click it. Here’s an example of the new drop-down:

Previously I have only seen Knowledge Graph data included in the right sidebar when you search for a company name or brand. But, now there is a small drop-down next to the site’s URL, as shown above. When you click the drop-down, it looks like this:

The data is clearly from the Knowledge Graph, although for the Marriott Hotels example above there is no source shown. I was able to see that for another query, related to software outsourcing, the data comes from Wikipedia:

The good news here, I suppose, is that it’s not just huge major brands like Marriott that this is showing up for: other companies are now being included with this new Knowledge Graph drop-down in the search results. So, if your company is included in the Knowledge Graph (for example, by being in Wikipedia or Freebase.com), then there’s a chance that your site will get a drop-down like this, as well.

Google announced they were testing this back in January, but I’m personally seeing this much more now, so perhaps it’s being rolled out to more sites’ search listings.

GOOGLE RECONSIDERATION REQUESTS: ARE MANUAL REVIEWS REVIEWED BY HUMANS?

Google’s “manual review” process for websites that have been penalized for violating Google’s Webmaster Guidelines is far from being a “manual” process. In fact, based on the response to a recent Reconsideration Request, I am now convinced that even the Manual Review process at Google has been algorithmized and automated. Google needs to immediately change the wording on what they call a Manual Review, because it’s not manual: humans obviously aren’t manually reviewing websites, and they do not personally review reconsideration requests. Calling the process a manual action or implying that they manually review websites is deceptive and dishonest if Google’s employees or subcontractors don’t manually review websites.

Google needs to immediately change the wording on what they call a Manual Review if they’re not reviewed by a human.

I’m going to go out on a limb here by saying that Google’s “manual reviews” of websites are not manually reviewed by Google employees, their subcontractors, their sub-subcontractors, or even humans. Based on the results of a recent “manual review” of a Reconsideration Request based on a site’s “manual” penalty for having inorganic, unnatural links pointing to their site, there is no possible way a human reviewed the request.

Google gets about 5,000 requests for reconsideration every week:

Let’s take a look at an example of a recent Reconsideration Request I was involved in. This particular website was given a manual penalty, or technically a “manual action”, or “partial match”:

Unnatural links to your site—impacts links

Google has detected a pattern of unnatural artificial, deceptive, or manipulative links pointing to pages on this site. Some links may be outside of the webmaster’s control, so for this incident we are taking targeted action on the unnatural links instead of on the site’s ranking as a whole.

So, what does this really mean? The site has links that need to be removed because they violate Google’s Webmaster Guidelines. The site is “trying to rank” for certain phrases, so they got links placed in articles on blogs and were involved in some “guest post” type of activities. Google doesn’t like that, it violates their guidelines, so it needs to be cleaned up.

Fine. I get that.

So, one of the things that we do here at Globe Runner is clean up these messes, and get manual link penalties removed or “revoked” as Google likes to call it. And we’re good at it. In fact, just yesterday, we got a positive response to a Reconsideration Request, where a manual action was revoked on a site that needed its links cleaned.

Here at Globe Runner, we have a very in-depth process of identifying and cleaning up a site’s links. In fact, some other SEO companies have called our process “overkill”. But that’s fine. We always go above and beyond what could be done to remove a manual penalty from Google.

So, why do I think that Google’s “manual reviews” are not done by humans? Let’s take a closer look at this particular site’s reconsideration request and what we did for this website, and then you can decide. The following is from part of a lengthy letter we made available to Google via Google Docs. We used the Reconsideration Request form in Webmaster Tools to request a review:

Overall, after gathering all of the links to the site, we ended up with:

52,252 Total Links

217 referring root domains

693 Healthy Links

709 Inorganic Links

569 “live” inorganic links to get removed (including nofollow links)

What We Did to Clean Up the Links

We manually reviewed all of the 569 links that were still “live”, which included links with the “nofollow” attribute as well as links without the “nofollow” attribute in the link.

We looked up the site owners of all 569 links, using a combination of whois lookups as well as manually visiting the sites to find the site owners. We began contacting website owners on February 5, 2014, and requested that the links be removed. Around February 8, 2014, we sent second requests for link removal to site owners we hadn’t heard from and who hadn’t removed the links. On February 17, 2014, we sent a third round of requests for link removal to site owners we still had not heard from and who hadn’t removed the links.

As of February 24, 2014, we have heard from 296 site owners. Of those site owners who responded:

– 12 sites refused to remove the links

– 257 links were successfully removed based on our email requests

– 27 links – site owners requested payment to remove the links

– 2 links – we were unable to contact site owners

– 274 links – site owners were completely unresponsive after 3 contacts

– 256 links were removed as a result of our link removal efforts

We prepared, and uploaded, a new disavow file that includes the links whose site owners were unresponsive, site owners we were unable to contact, and site owners who requested payment for removal.

So, after hearing from 296 site owners who responded to our numerous emails, we got 256 links removed. Keep in mind that we found 52,000 links in total, but in reality many were site-wide links and links that no longer existed, so there were actually only about 4,000 links total. And we provided a spreadsheet of every single URL and included all of the email responses we received, close to 300 emails!

Google denied this reconsideration request. In fact, not only did they deny this reconsideration request, but they were “helpful” and included sample URLs that they had a problem with. They provided 3 sample URLs. Google does this to help point out the types of links that they have a problem with.

The 3 sample URLs provided were URLs included in our spreadsheet. Not only that, the sample URLs included domains where the site owner specifically told us that we must pay to get the link removed, which is documented in the reconsideration request, along with copies of the emails we received. And all of the sample URLs given to us by Google in response to our reconsideration request had been disavowed because we could not get the links removed (again, documented in the response).

After seeing the efforts that we went through to get hundreds of links removed, which we documented, even including the actual emails, Google told us that the URLs they had a problem with are ones that we couldn’t get removed.

I’m convinced that Google’s so-called “manual reviews” of websites are not actually reviewed by Google employees. If they were, would they have included sample URLs that we documented we could not get removed because a site owner wants payment? I realize that Google gets over 5,000 reconsideration requests every week, and there are a lot of sites to deal with. So part of the Reconsideration Request process has to be automated. But to continue to let website owners think that a human at Google is going to manually review their request is misleading.

As for this particular site, I believe this is an isolated incident, as we get plenty of positive responses to our reconsideration requests based on the work we do to clean up links. We’re working on finding more “bad links” and “inorganic links” to this site, and will continue to get more links removed. And at some point we will file another reconsideration request once we’re convinced we’ve gotten all the links removed or taken care of in some way (perhaps by disavowing them and documenting why they’re disavowed).

Whatever the case, Google claims that every reconsideration request is reviewed by a human. Here’s some further reading if you’d like to see what’s been addressed in the past:

Google Reconsideration Responses – SE Roundtable

Google: We Get 5,000 Reconsideration Requests Per Week – SE Roundtable

Bill Hartzer is Globe Runner’s Senior SEO Strategist. Connect with him on Google+ or on Twitter as Bhartzer.

Update: 5:16pm CST March 5, 2014

Google confirms that they do, in fact, manually review reconsideration requests, according to a tweet by Nathan Johns (tweet ID 441350840603779072).

DIGITAL MARKETING NEWS: FEBRUARY 2014

As a digital marketing specialist, it is absolutely necessary for me to stay up-to-date with the latest industry news, updates, and consumer technology trends. In February, I found the following articles to be especially noteworthy or interesting.

Link Building

‘Link Building in 2014 is All About Your Brand & Reputation’ is a great article discussing the impact Google search algorithm updates can have on businesses, especially small and medium-sized businesses. The writer gives a detailed and easy-to-read description of past algorithm updates which directly targeted specific link building strategies. Quoting sources such as Matt Cutts, Google’s Head of Webspam, this article explains why it’s more important than ever to include branding when developing a digital marketing strategy and link building campaigns. My favorite quote from the reading:

If you read the interview carefully, you’ll see that it’s a blueprint for content marketing. Basically, it lays out the components of how you can create great content on and off your site, promote it with strong social media presences, press releases, and other tactics to build a great brand online.

Keyword Research

Another article, ‘Keyword Research After The Keyword Tool, (Not Provided) & Hummingbird Apocalypse’, describes the “Keyword Apocalypse” and how to move forward and understand the new era of keyword research. Because of the destruction of valuable keyword data and tools which were used to make data-driven and justifiable decisions, digital marketers now have to adjust to a new Google and a new tool set.

Google shifted to a new algorithm called Hummingbird in late August, 2013. I found trying to describe the updated algorithm to somebody who doesn’t work in the digital marketing industry to be fairly difficult. This article includes the easiest-to-understand description of Hummingbird I have read:

In fact, the only thing that Hummingbird changed was how the actual search query was processed. In short, Google rewrites your search query. [What’s a good place to get Chinese food?] might be rewritten as [chinese food canton ohio].

The author then goes into detail about the correlation between words and how that relates to your keyword research and content strategies. He introduces readers to new and existing tools that can help discover keyword phrases, search volumes, synonyms, and more. He does a great job of explaining how to perform thorough keyword research using techniques that will support your online presence and marketing initiatives moving forward.

Keyword research is still very important, but knowing your user is more important. If you pay attention to your users and listen to what they have to say, you’ll discover plenty of valuable information that can be used to develop great marketing strategies used to attract the customers you want.

Google AdWords

A third article titled ‘The Real Reason AdWords Isn’t Working For Many Small Businesses’ is a good read because it describes some common misconceptions about Google AdWords. Evidently, the article is a response to a New York Times write-up which describes AdWords as not being practical for small businesses. The author uses the examples from the New York Times article to show the usual mistakes AdWords customers make and why they struggle to find success with AdWords. My favorite quotes are:

“Here’s where the biggest disconnect occurs for many people when it comes to PPC. It’s not about the cost per click – it’s about how much effort advertisers put in.”

“…dismissing paid search as a customer acquisition strategy would be crazy. Why? Because for many AdWords customers, the cost of the ads isn’t the issue.”

In the author’s opinion, the top three mistakes that cause an unsuccessful AdWords campaign are the following:

1) Infrequent Logins

2) Not Enough Activity

3) No Negative Keywords

I’ve witnessed great success with Google AdWords and believe it should be a part of any company’s digital marketing strategy. The information that can be gained from AdWords is almost invaluable. You can discover converting categories, sticky keywords, niche online communities, and more.

ARE SITES OUTRANKING YOU WITH YOUR OWN CONTENT?

If you answer yes to this question, then you have a potential search engine ranking problem on your hands. But the good news is that it can be fixed. If you are seeing other websites outrank your site (that is, show up higher in the search results than your site) and they appear to have copied your website content, there definitely is a problem. Here is what you need to do in order to fix this situation.

Google has released a new form that allows sites to report scrapers.

Before doing anything else, we first need to make sure that someone really is copying your content and using it without your permission.

1. First, you need to see if someone has truly copied your content. Take the URL of a page on your site that you suspect has been copied and run it through CopyScape.com. This will show you whether any of the pages it finds on the web are copying your content. If the site outranking your content shows up in this list, then there’s a good chance you have an issue.

2. Next, you need to take the title of one of your pages. I prefer to use the title tag, but it could be a very unique headline (such as the title of a blog post). Put it in quotes and search for it in Google, like this:

"Link Removals: Sometimes Websites Get Taken Down"

That’s the title of my last blog post here on this blog, so I thought that I would go ahead and use it as an example. When you search for the title in quotes (hopefully it’s unique) then you should only see your page or your blog post. But, if something else shows up in the search results, then:

- The phrase in quotes you searched for might not be unique enough. Try a sentence from your page.

- You might have shared the post on social media sites like Google+, Twitter, or your Facebook page. That’s okay.

- The content that’s stolen or copied from your site might be showing up.
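The quoted-title search from step 2 can also be built as a URL, which is handy if you want to check a number of pages. This is a minimal sketch: `exact_phrase_search_url` is a hypothetical helper name, and it assumes the title itself contains no double quotes.

```python
from urllib.parse import urlencode


def exact_phrase_search_url(title: str) -> str:
    """Build a Google search URL for the title wrapped in double quotes."""
    query = f'"{title}"'  # the quotes ask for an exact-phrase match
    return "https://www.google.com/search?" + urlencode({"q": query})


print(exact_phrase_search_url("Link Removals: Sometimes Websites Get Taken Down"))
```

Opening the printed URL in a browser runs the same search as typing the quoted title into Google by hand.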

If it’s the last case, that your content appears to have been taken or copied, then there are generally a few reasons why:

- Your site is not as trusted as the other site. Unfortunately, sometimes if you have a fairly new site or a site with Google Penguin issues then the other site (the site scraping/copying/stealing your content) is going to rank better.

- The other site has higher PageRank than your site, and has more links or more trusted links than your site.

This last issue can be a problem. Because the other site has higher PageRank, it gets crawled and its content gets indexed faster than your site’s content gets crawled and indexed. The way Google’s duplicate content filter works is that whichever page or site gets crawled first is treated as the originator of the content. Then, if Google finds other copies, even your original, those won’t rank as well as the copy it found first.

One good way to deal with a situation like this is to get more trust and authority for your website. I know that can take time, and it involves getting new trusted and authoritative links to your website. It’s not an easy fix.

Another way to deal with this, though, is something that you can do RIGHT NOW. Whenever you add new content to your site, like a new page or a new blog post, you must immediately go over to Twitter and/or Google+ and post something about it. Include the original URL of the page, and not just a “tiny url”. It needs to be the full URL if possible. Twitter will change it to a tiny URL, and that’s okay. Posting on Google Plus is important, as well. When you post, Google finds the new URL and will crawl it. This will minimize the chances that the other site will get your content posted and crawled before your own page is.

If you are using WordPress, and you make a blog post, there are settings in WordPress that will cause your site to send a “ping” to Google (and other search engines and sites) to notify them of an update. It will send them the new URL. This also will help cause Google to crawl your new page.
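To give a rough idea of what such a ping looks like, here is a minimal sketch of the XML-RPC `weblogUpdates.ping` call that blog update services commonly accept. The blog name, the URLs, and the helper names are placeholders for illustration. The payload in this sketch carries the site's name and address, and the exact calls WordPress makes may differ from this.

```python
import xmlrpc.client


def build_ping_payload(blog_name: str, blog_url: str) -> str:
    """Return the XML body of a weblogUpdates.ping request."""
    return xmlrpc.client.dumps((blog_name, blog_url), methodname="weblogUpdates.ping")


def send_ping(endpoint: str, blog_name: str, blog_url: str):
    """Send the ping to an update service (not called here; needs network access)."""
    server = xmlrpc.client.ServerProxy(endpoint)
    return server.weblogUpdates.ping(blog_name, blog_url)


# Placeholder values, for illustration only.
payload = build_ping_payload("Example Blog", "https://example.com/")
print(payload)
```

In WordPress itself you don't need to write any of this; the list of services to notify is managed in the settings mentioned above.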

Another way to deal with copied content that’s outranking you is to file a DMCA takedown request. Find out more about the Digital Millennium Copyright Act: a request under it, when filed properly with Google and the site’s web host, will cause that content to be taken down. Google will remove the page from their search results and the site’s web host usually complies by removing the content, as well. They might even remove the entire website.

Finally, if you are having trouble with Google search engine rankings of your content and there is a scraper site involved (someone copying your content and outranking your site) then you can use Google’s new Scraper Tool to notify them of the problem. This doesn’t mean that they’re going to do something about it right away, but they may use that information in a few possible ways:

- Google may use the data to program a new part of their algorithm. Very likely.

- Google may use the data to remove the other site from their search results. Highly unlikely.

Whatever the situation, Google will use the information you give them somehow. I would first deal with the situation by doing several or all of the tasks I mentioned above. But then you might want to report it. That might, at a minimum, just give you a “warm and fuzzy feeling” that you reported it to Google.

Bill Hartzer is Globe Runner’s Senior SEO Strategist. Connect with him on Google+ or on Twitter as Bhartzer.

LINK REMOVALS: SOMETIMES WEBSITES GET TAKEN DOWN

One of the services we provide here at Globe Runner is a process of cleaning up a website’s links. Due to the Google Penguin algorithm updates in the past year or so, a lot of sites have suffered dramatic search engine ranking losses and have had severe hits to their site traffic. One thing that we do very well here at Globe Runner is get back the traffic a site has lost due to Google penalties.

The Google Penguin algorithm update determines whether or not you have low quality links or “questionable” links pointing to your website that attempt to manipulate your site’s search engine rankings. If you have low quality links pointing to your website, you need to get those links removed. Not only do you need those links removed, you can also disavow the links.

During the link removal process that we go through, which is extremely thorough, we come across some pretty strange situations. In some extreme cases, we don’t mean to get a website banned or taken down by the site’s web host, but sometimes that happens. One such situation occurred, and now, when you go to the site, you see this message:

Well, I guess we got the link removed, ya think?

Bill Hartzer is Globe Runner’s Senior SEO Strategist. Connect with him on Google+ or on Twitter as Bhartzer.

HOW TO KNOW IF A WEBSITE HAS DISAVOWED LINKS

By now, you probably have heard that if you do not like the websites that are linking to your website, you can tell Google (and Bing.com) that you don’t like them. You can upload a file with a list of domain names or URLs of sites that they should not take into account when considering the links to your website. Google has extensive information about the disavow links process here.

Keep in mind, though, that it is impossible to know if a website that you do not own has disavowed links or not.

But how do you know if your website has disavowed links already? I know that can sound a bit weird, but to be honest with you it’s not a weird question. In fact, with so many different people working on websites nowadays, and companies hiring various search engine marketing firms and switching SEO companies all the time, it’s sometimes not an easy question to answer. Sometimes you don’t actually know if a disavow file has been uploaded in Google Webmaster Tools. And even if someone “thinks” or “knows” that they uploaded one, there’s no telling what domains or links were disavowed.

So, it can be confusing, but there is a really easy way to figure out:

– if a disavow file was uploaded

– what the file looks like

It’s also helpful (or even mandatory) to have the actual disavow file that was uploaded, especially if you are going to analyze the links again and possibly submit a new disavow file. Usually I just add on to the current file after removing the duplicates. But again, you have to have the last disavow file that was uploaded.
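Adding on to the current file and removing the duplicates can be sketched in a few lines of Python. This is only an illustration: the `merge_disavow` name and the sample domains are made up, and it assumes the usual disavow file layout of one URL or `domain:` entry per line, with `#` marking comments.

```python
def merge_disavow(existing: str, additions: str) -> str:
    """Append new entries to an existing disavow file, skipping duplicates."""
    seen = set()
    merged = []
    for line in existing.splitlines() + additions.splitlines():
        entry = line.strip()
        if not entry:
            continue  # drop blank lines
        if entry.startswith("#"):
            merged.append(entry)  # keep comments as they are
            continue
        # Domain names are case-insensitive, so compare "domain:" entries in lowercase.
        key = entry.lower() if entry.lower().startswith("domain:") else entry
        if key not in seen:
            seen.add(key)
            merged.append(entry)
    return "\n".join(merged) + "\n"


# Made-up sample data, for illustration only.
current_file = "# uploaded earlier\ndomain:spam.example\nhttp://bad.example/page.html\n"
new_entries = "domain:SPAM.example\nhttp://bad.example/page.html\ndomain:new.example\n"
print(merge_disavow(current_file, new_entries))
```

Here only `domain:new.example` is added, because the other two new entries already exist in the current file.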

Here’s how to find out if your site has disavowed links.

First, go to the disavow links tool. On that page, you’ll see a list of websites that you’ve verified in Google Webmaster Tools. The following is a screen capture of what it looks like, for my personal blog/website:

Select your site’s URL and then click “DISAVOW LINKS”.

Then, click the disavow links button again:

Once you click that button, you’ll be taken to a page where you’ll see when you last uploaded a disavow file. If a file has been uploaded, then great: you’ll see a download button, as shown below:

If you don’t see a date and time and a download link, then the site does NOT have a disavow on file with Google.

By the way, have I mentioned that we’re experts in dealing with disavow files and helping websites recover from Google linking penalties? Feel free to contact us if you have questions about the process or need help. Remember, if it’s not done right you can easily hurt your site’s rankings even worse than they are now.

Bill Hartzer is Globe Runner’s Senior SEO Strategist. Connect with him on Google+ or on Twitter as Bhartzer.

WHAT’S HOT ON GOOGLE+ REMOVED, REPLACED WITH EXPLORE TAB

Google Plus continues to make changes to their interface by making feature changes and moving content and options around. I am not surprised, as Google is constantly tweaking their search results pages for a better user experience. So why shouldn’t they do this with Google Plus?

The What’s Hot and Recommended on Google+ feature, which previously was available on the “Home” drop-down menu, has gone away. Now, this content appears on the Explore tab.

As you can see, my personal list of tabs is “SEO” and “Dallas” circles, and then it shows the “Explore” tab. By clicking the Explore tab, you’ll see the most popular posts on Google Plus, from other users (other than from your own circles).

What’s interesting to note is that while Google+ has removed the What’s Hot page (option), they have not updated their online help for this option. It still says that you can find the What’s Hot content by going to the Home drop-down list:

To see What’s hot: Choose Explore What’s hot from the Google+ navigation menu.

Apparently now Google+ doesn’t want us to know that this content is hot; they want us to Explore the content. There’s not a really big difference between the two, but there is a connotation that “What’s Hot” content is more “exciting and thrilling” than content that we should just “Explore”.

What I’d like to know is whether the number of shares and +1s on this content has gone up or down since they made this change from What’s Hot to Explore.

Here’s a tip: The posts on your Explore tab on Google+ are the hottest or most-shared recent posts on Google+. To build your following and connect with influencers, you’ll want to share this content and connect with (and follow) these people. You can also look at the Ripples of these posts and see who the influencers are and connect with them, as well.

Bill Hartzer is Globe Runner’s Senior SEO Strategist. Connect with him on Google+ or on Twitter as Bhartzer.

HOW TO: FIND ALL THE POSTS BY A GOOGLE AUTHORSHIP VERIFIED AUTHOR; WITH ANALYTICS

Writers often write for more than one blog or website. Good writers are in demand, so they will tend to write for several different publications. When it comes to Google Authorship, those writers who have adopted Google Authorship and accepted it as the norm now will often verify their authorship on multiple websites. Wouldn’t it be nice to be able to see just how good that author is? Wouldn’t it be great to see what other blog posts or articles they’ve written? Well, now you can search Google and find all of the articles that a verified Google Authorship author has penned. And, by combining that with certain analytics, you can see how great their articles really are.

When an author verifies their Google Authorship, they must list the websites that they contribute to or write for in their Google Plus profile. If you were to go to my Google+ profile, you would see a list of websites where I have verified my authorship. I personally list a lot of sites, especially sites that I own, as well as my blog and the company blogs where I have written and write. But what if you wanted to see a list of articles or blog posts that I’ve written and verified my authorship? Let’s use myself as an example and I’ll show you exactly how to do that.

First, you have to find one of the articles or blog posts that I’ve written. That’s generally not very difficult: just find somewhere in the Google search results where my Google Authorship photo snippet appears, like below:

Next, you’ll need to click on the link where it says “By Bill Hartzer”, as shown below:

You are then taken to another Google search results page, where you have more posts that I have written. In the search box at the top, remove everything (the text) except for the author’s name. I have scratched out what you need to remove, in red, below:

Notice that the author’s name is in Blue in the search field. Once you remove the text and leave the author’s name, you can search again. Below, you will see the result of that:

As you can see, even though I searched originally for a post that appeared on the Globe Runner blog, you now see a search result that includes all of the posts where I have verified Google Authorship. And most likely, the “best” search result (the most appropriate one) will be the first one. And the better posts will most likely rank better in the Google search results.

So, what if we were to add in some analytics to these search results? What if we turned on a Firefox add-on that shows some stats and analytics about each of these posts? Well, let’s do just that. Here’s a screen capture of my posts and articles where I’ve verified Google Authorship, with the SEO Quake Firefox plug-in installed:

Now, we can see the posts where I am the verified author, and you can see just how well I write, how my posts tend to get links, and even other data like PageRank, number of links to the post, and other interesting analytics.

Bill Hartzer is Globe Runner’s Senior SEO Strategist. Follow him on Google Plus.
